Artificial intelligence has quietly taken its place among construction teams. It doesn’t wear a hard hat or check in at the site trailer, but it’s working: designing layouts, forecasting delays, operating equipment, flagging safety risks, and even managing buildings long after turnover.

AI’s footprint on jobsites is growing quickly. So are its risks, and the law seems to be playing catch-up.

Construction contracts, by and large, don’t address the use of AI, and that omission is becoming dangerous. Why? Because when algorithms begin making decisions, especially the kind that affect budgets, timelines, safety, and design integrity, the question is no longer whether they’re helpful, but who gets sued when they’re wrong.

In light of this apparent inevitability, if AI is part of the project team, it needs to be accounted for in writing.

The Expanding Role of AI in Construction Workflows

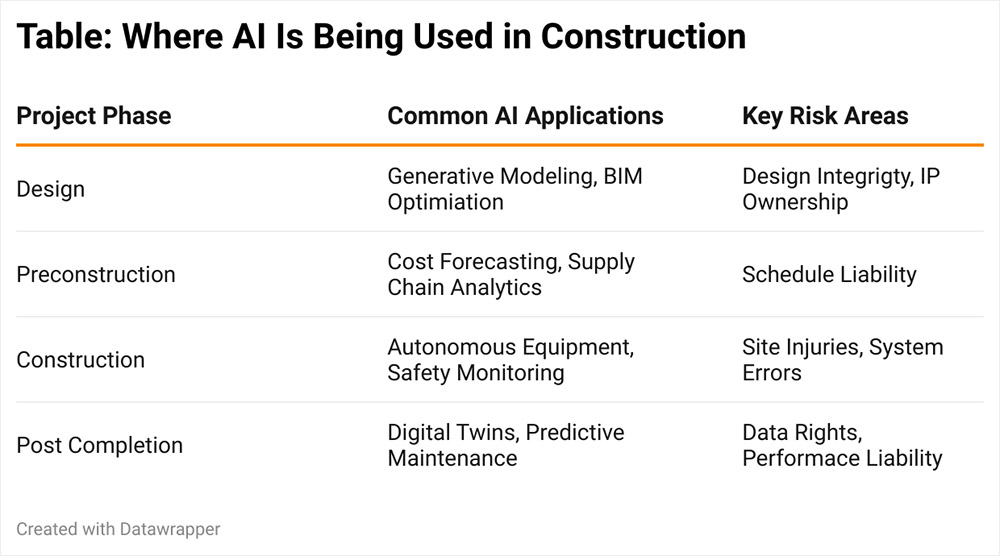

AI has moved well beyond the hypothetical in construction. Across every stage of a project, the technology is increasingly embedded in core processes.

In the design phase, AI-enhanced tools suggest optimal structural layouts and material combinations, often integrated within Building Information Modeling (BIM) platforms. During preconstruction, algorithms leverage historical data to predict project timelines, detect supply chain risks, and anticipate cost escalations.

AI doesn’t wear a hard hat, but it’s already part of the crew.

On active jobsites, autonomous equipment can now perform excavation, bricklaying, and inspections with AI-assisted precision. Computer vision systems monitor safety compliance, identifying PPE violations and flagging hazards in real time. After project completion, AI-driven digital twins can continue managing and optimizing building performance.

These tools are no longer passive assistants; they’re decision-makers, which brings profound legal and contractual ramifications.

Where Traditional Construction Contracts Fall Short

One of the most glaring gaps in standard construction contracts is the absence of direction for when AI-driven tools malfunction or produce incorrect outputs. Consider a scenario where a generative design system proposes a structurally unsound layout that a designer follows in good faith. If the result is a failure, who bears responsibility: the designer, the contractor, or the software provider? Without express contract language, the outcome may depend on unpredictable litigation.

48B

That’s the estimated global market value of AI in construction projected by 2030.

Standard form contracts, including widely used documents from the AIA and ConsensusDocs, were not drafted with AI in mind. As a result, they fail to address key questions such as who is responsible for selecting and configuring AI tools, who owns the data and digital content these systems generate, who assumes liability for actions or decisions made by autonomous systems, and whether AI malfunctions, cyberattacks, or system failures are treated as force majeure events.

The absence of contractual guidance leaves parties vulnerable to risk misalignment, unclear legal exposure, and potential insurance disputes.

Legal Risk and Red Flags: How AI Might Complicate Construction Liability

The legal implications of AI use in construction are becoming harder to ignore. As artificial intelligence tools take on more decision-making roles across project lifecycles, industry professionals and legal observers alike are starting to consider how failures or misapplications of these technologies might trigger disputes.

The question is no longer whether AI helps; it’s who gets sued when it’s wrong.

A range of hypothetical (but increasingly plausible) scenarios has surfaced within the industry. For instance, a machine-learning project management platform could produce inaccurate schedule forecasts, disrupting critical-path planning and potentially exposing contractors to liquidated damages. A BIM-integrated clash detection tool might overlook a significant conflict between mechanical and electrical systems, necessitating costly rework. Safety monitoring systems powered by AI may fail to detect site hazards, resulting in injuries and liability exposure.

In addition, questions surrounding the ownership of AI-generated digital assets, especially when several parties contribute to their creation, might spark disputes over usage rights and intellectual property.

Beyond these scenarios, there are broader structural risks construction teams should keep in focus. These include unverified reliance on AI outputs without human oversight, failure to disclose the use of AI in critical decision-making, dependence on third-party platforms that provide limited warranties or indemnification, unclear ownership of digital content produced by AI systems, and inadequate cybersecurity measures for cloud-based tools handling sensitive data.

These issues reflect a growing zone of legal and contractual ambiguity. The tools may be virtual, but the exposure they create is very real. And with traditional contracts providing minimal guidance (many were drafted before AI entered the construction lexicon), the need for updated legal frameworks is becoming increasingly urgent.

The Case for AI-Specific Contract Clauses

Given the rapidly evolving technological landscape, construction contracts must evolve as well. AI-specific provisions can provide a much-needed safeguard against the legal uncertainties introduced by algorithm-driven tools.

An effective AI clause should include several core components. It should provide clear definitions, specifying terms such as “artificial intelligence,” “autonomous systems,” and “machine learning tools” to ensure shared understanding. Disclosure requirements should oblige parties to disclose their use of AI tools and provide notice of any material changes during the course of work. Verification standards should require licensed professionals to review and approve all AI-generated outputs before use or implementation.

One

That’s the number of standard contract templates that currently mention artificial intelligence.

Liability and indemnity provisions should assign responsibility to the party selecting or configuring the AI and require indemnification for harm caused by improper use or malfunction. Intellectual property and data rights should define ownership and permitted use of AI-generated content, especially as it relates to reuse, modification, or post-project application. Finally, force majeure considerations should address whether AI-related outages or cyber events qualify as excusable delays, and audit rights should allow review of AI performance data and decision logic in the event of a dispute.

Rewriting the Rules for a Machine-Enhanced Industry

AI is now a fixture on jobsites. Whether optimizing designs, forecasting risk, or managing safety, these tools are embedded in the project lifecycle. Yet construction contracts, long the industry’s instrument for allocating responsibility and managing risk, often fail to acknowledge this reality.

Construction contracts are overdue for a software upgrade.

Contractors, subcontractors, developers, and design professionals all stand to benefit from proactive contractual language that clearly defines how AI is used, who is responsible for it, and what happens when it fails.

In a world where critical project decisions may be made by code rather than people, failing to address AI in contract terms is no longer an oversight; it’s a liability.

The most consequential contributor to a project may not appear in the organizational chart or the contract’s list of parties. But if it’s shaping designs, scheduling work, or managing site safety, its presence must be addressed. Construction contracts are overdue for a software upgrade, and the AI clause might be the patch that keeps risk, and responsibility, in balance.